What is the best way to pass the Microsoft DP-200 exam? (First: Exam practice test, Second: leads4pass Microsoft expert.) You can get free Microsoft other Certification DP-200 exam practice test questions here.

Or choose https://www.leads4pass.com/microsoft-other-certification.html Study hard to pass the exam easily!

Table of Contents:

- Latest Microsoft other Certification DP-200 google drive

- Effective Microsoft DP-200 exam practice questions

- Related DP-200 Popular Exam resources

- leads4pass Year-round Discount Code

- What are the advantages of leads4pass?

Latest Microsoft other Certification DP-200 google drive

[PDF] Free Microsoft other Certification DP-200 pdf dumps download from Google Drive: https://drive.google.com/open?id=1H70200WCZAc8N43RdlP4JVsNXdOm0D2U

Exam DP-200: Implementing an Azure Data Solution: https://docs.microsoft.com/en-us/learn/certifications/exams/dp-200

Candidates for this exam are Microsoft Azure data engineers who collaborate with business stakeholders to identify and meet the data requirements to implement data solutions that use Azure data services.

Azure data engineers are responsible for data-related tasks that include provisioning data storage services, ingesting streaming and batch data, transforming data, implementing security requirements, implementing data retention policies, identifying performance bottlenecks, and accessing external data sources.

Candidates for this exam must be able to implement data solutions that use the following Azure services: Azure Cosmos DB, Azure SQL Database, Azure SQL Data Warehouse, Azure Data Lake Storage, Azure Data Factory, Azure Stream Analytics, Azure Databricks, and Azure Blob storage.

Skills measured

- Implement data storage solutions (40-45%)

- Manage and develop data processing (25-30%)

- Monitor and optimize data solutions (30-35%)

Latest updates Microsoft DP-200 exam practice questions

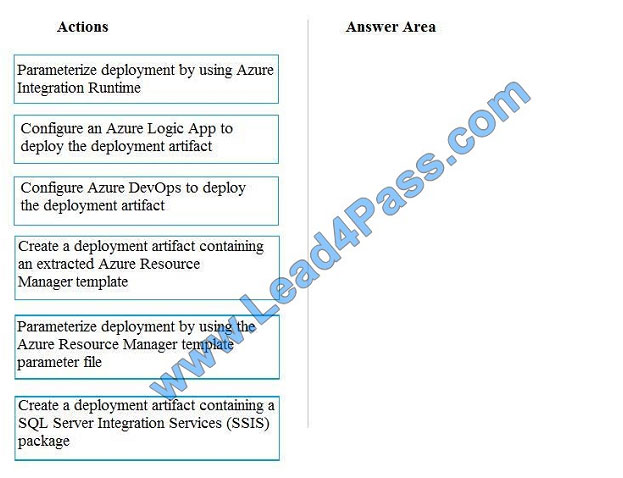

QUESTION 1

You need to ensure that phone-based polling data can be analyzed in the PollingData database.

Which three actions should you perform in sequence? To answer, move the appropriate actions from the list of actions to the answer area and arrange them in the correct order.

Select and Place:

Correct Answer:

All deployments must be performed by using Azure DevOps. Deployments must use templates used in multiple environments. No credentials or secrets should be used during deployments.

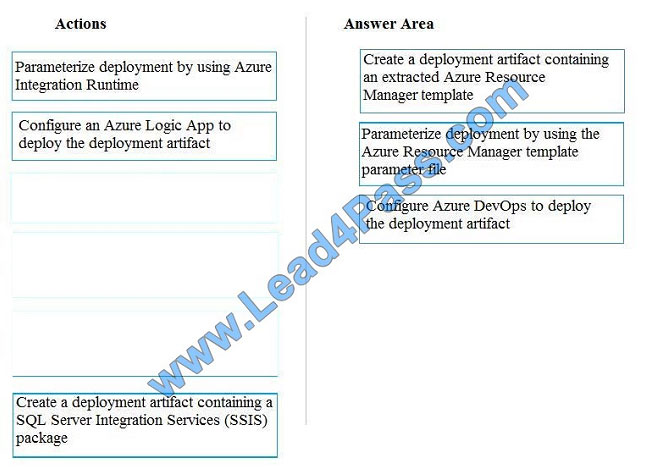

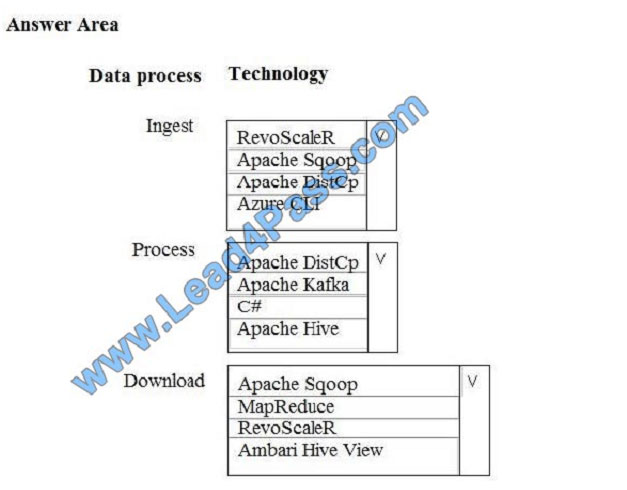

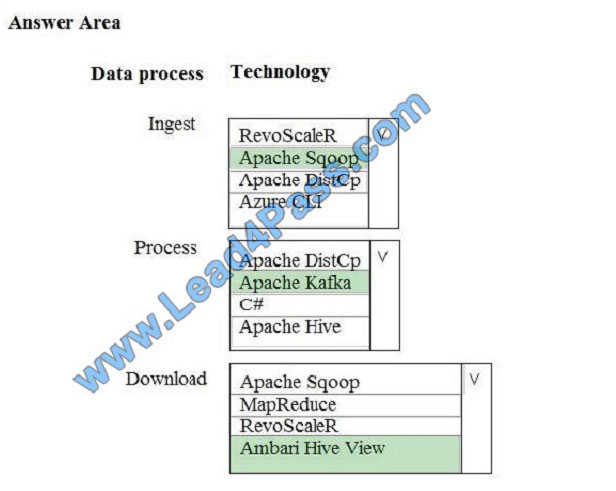

QUESTION 2

A company is deploying a service-based data environment. You are developing a solution to process this data.

The solution must meet the following requirements:

Use an Azure HDInsight cluster for data ingestion from a relational database in a different cloud service

Use an Azure Data Lake Storage account to store processed data

Allow users to download processed data

You need to recommend technologies for the solution.

Which technologies should you use? To answer, select the appropriate options in the answer area.

Hot Area:

Correct Answer:

Box 1: Apache Sqoop

Apache Sqoop is a tool designed for efficiently transferring bulk data between Apache Hadoop and structured

datastores such as relational databases.

Azure HDInsight is a cloud distribution of the Hadoop components from the Hortonworks Data Platform (HDP).

Incorrect Answers:

DistCp (distributed copy) is a tool used for large inter/intra-cluster copying. It uses MapReduce to effect its distribution, error handling and recovery, and reporting. It expands a list of files and directories into input to map tasks, each of which will copy a partition of the files specified in the source list. Its MapReduce pedigree has endowed it with some quirks in both its semantics and execution.

RevoScaleR is a collection of proprietary functions in Machine Learning Server used for practicing data science at scale.

For data scientists, RevoScaleR gives you data-related functions for import, transformation and manipulation,

summarization, visualization, and analysis.

Box 2: Apache Kafka

Apache Kafka is a distributed streaming platform.

A streaming platform has three key capabilities:

Publish and subscribe to streams of records, similar to a message queue or enterprise messaging system.

Store streams of records in a fault-tolerant durable way.

Process streams of records as they occur.

Kafka is generally used for two broad classes of applications:

Building real-time streaming data pipelines that reliably get data between systems or applications

Building real-time streaming applications that transform or react to the streams of data

Box 3: Ambari Hive View

You can run Hive queries by using Apache Ambari Hive View. The Hive View allows you to author, optimize, and run

Hive queries from your web browser.

References:

https://sqoop.apache.org/

https://kafka.apache.org/intro

https://docs.microsoft.com/en-us/azure/hdinsight/hadoop/apache-hadoop-use-hive-ambari-view

QUESTION 3

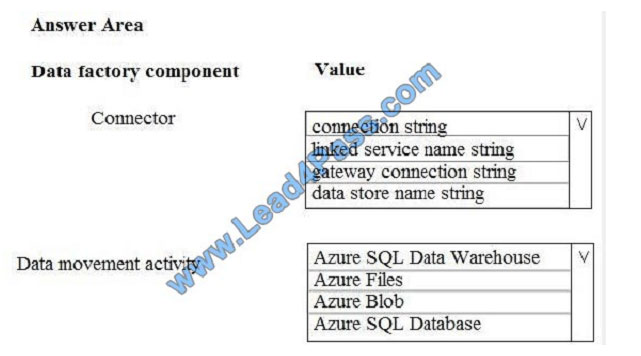

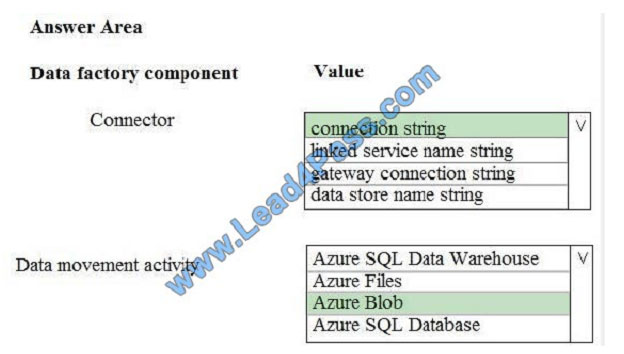

You need to set up the Azure Data Factory JSON definition for Tier 10 data.

What should you use? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is

worth one point.

Hot Area:

Correct Answer:

Box 1: Connection String

To use storage account key authentication, you use the connectionString property, which specifies the information needed to connect to Blob Storage.

Mark this field as a SecureString to store it securely in Data Factory. You can also put the account key in Azure Key Vault and pull the accountKey configuration out of the connection string.

Box 2: Azure Blob

Tier 10 reporting data must be stored in Azure Blobs

References: https://docs.microsoft.com/en-us/azure/data-factory/connector-azure-blob-storage
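As a rough illustration of the explanation above, the following Python sketch builds a Data Factory linked-service definition for Blob Storage that uses account-key authentication, with the connection string marked as a SecureString. The linked-service name `Tier10BlobStorage` and the `<accountname>` and `<accountkey>` placeholders are assumptions for illustration; they do not come from the exam scenario.

```python
import json


def blob_linked_service(name: str, account_name: str, account_key: str) -> dict:
    """Build a Blob Storage linked-service definition using account-key auth."""
    connection_string = (
        "DefaultEndpointsProtocol=https;"
        f"AccountName={account_name};"
        f"AccountKey={account_key}"
    )
    return {
        "name": name,
        "properties": {
            # Box 2 in the answer: the linked service targets Azure Blob storage.
            "type": "AzureBlobStorage",
            "typeProperties": {
                # Box 1 in the answer: a connection string, marked SecureString
                # so that Data Factory stores the value securely.
                "connectionString": {
                    "type": "SecureString",
                    "value": connection_string,
                }
            },
        },
    }


# Hypothetical name and placeholder credentials, for illustration only.
definition = blob_linked_service("Tier10BlobStorage", "<accountname>", "<accountkey>")
print(json.dumps(definition, indent=2))
```

In practice the account key would normally be kept in Azure Key Vault rather than written inline, as the explanation notes.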

QUESTION 4

You manage a financial computation data analysis process. Microsoft Azure virtual machines (VMs) run the process in daily jobs, and store the results in virtual hard drives (VHDs).

The VMs produce results using data from the previous day and store the results in a snapshot of the VHD. When a new month begins, a process creates a new VHD.

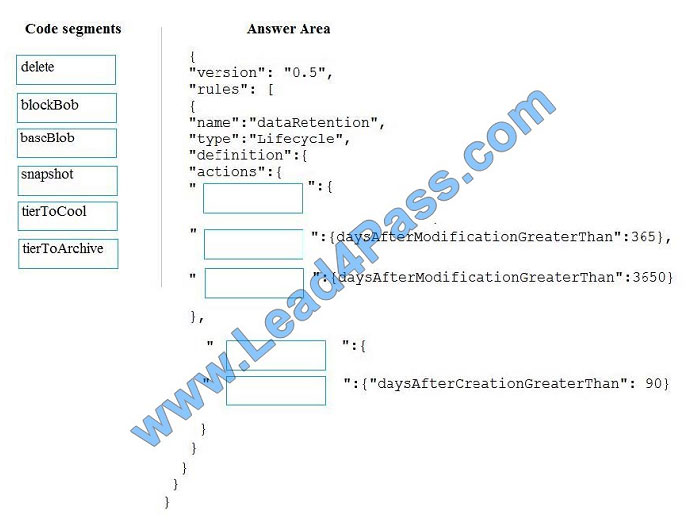

You must implement the following data retention requirements:

Daily results must be kept for 90 days

Data for the current year must be available for weekly reports

Data from the previous 10 years must be stored for auditing purposes

Data required for an audit must be produced within 10 days of a request.

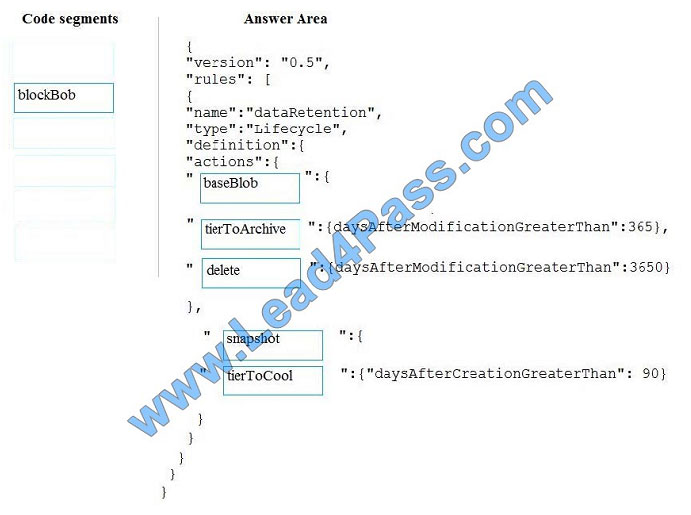

You need to enforce the data retention requirements while minimizing cost.

How should you configure the lifecycle policy? To answer, drag the appropriate JSON segments to the correct locations.

Each JSON segment may be used once, more than once, or not at all. You may need to drag the split bar between panes or scroll to view content.

NOTE: Each correct selection is worth one point.

Select and Place:

Correct Answer:

The Set-AzStorageAccountManagementPolicy cmdlet creates or modifies the management policy of an Azure Storage account.

Example: Create or update the management policy of a Storage account with ManagementPolicy rule objects.

Action -BaseBlobAction Delete -daysAfterModificationGreaterThan 100

PS C:\>$action1 = Add-AzStorageAccountManagementPolicyAction -InputObject $action1 -BaseBlobAction TierToArchive -daysAfterModificationGreaterThan 50

PS C:\>$action1 = Add-AzStorageAccountManagementPolicyAction -InputObject $action1 -BaseBlobAction TierToCool -daysAfterModificationGreaterThan 30

PS C:\>$action1 = Add-AzStorageAccountManagementPolicyAction -InputObject $action1 -SnapshotAction Delete -daysAfterCreationGreaterThan 100

PS C:\>$filter1 = New-AzStorageAccountManagementPolicyFilter -PrefixMatch ab,cd

PS C:\>$rule1 = New-AzStorageAccountManagementPolicyRule -Name Test -Action $action1 -Filter $filter1

PS C:\>$action2 = Add-AzStorageAccountManagementPolicyAction -BaseBlobAction Delete -daysAfterModificationGreaterThan 100

PS C:\>$filter2 = New-AzStorageAccountManagementPolicyFilter

References: https://docs.microsoft.com/en-us/powershell/module/az.storage/set-azstorageaccountmanagementpolicy
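Since the question asks for JSON segments, it may help to see the same kind of rule in JSON form. The Python sketch below builds a lifecycle management policy document whose thresholds mirror the PowerShell example above (cool after 30 days, archive after 50, delete after 100, snapshots deleted after 100 days, prefixes `ab` and `cd`). These numbers come from that example, not from the exam answer, and the `blockBlob` blob type filter is an assumption.

```python
import json


def lifecycle_rule(name, prefixes, cool_after, archive_after,
                   delete_after, snapshot_delete_after):
    """Build one lifecycle rule in the JSON shape used by storage management policies."""
    return {
        "name": name,
        "enabled": True,
        "type": "Lifecycle",
        "definition": {
            "filters": {
                # Assumed blob type; prefixes restrict which blobs the rule covers.
                "blobTypes": ["blockBlob"],
                "prefixMatch": list(prefixes),
            },
            "actions": {
                "baseBlob": {
                    "tierToCool": {"daysAfterModificationGreaterThan": cool_after},
                    "tierToArchive": {"daysAfterModificationGreaterThan": archive_after},
                    "delete": {"daysAfterModificationGreaterThan": delete_after},
                },
                "snapshot": {
                    "delete": {"daysAfterCreationGreaterThan": snapshot_delete_after},
                },
            },
        },
    }


# Values mirror the PowerShell example; they are not the exam's answer.
policy = {"rules": [lifecycle_rule("Test", ["ab", "cd"], 30, 50, 100, 100)]}
print(json.dumps(policy, indent=2))
```

For the retention requirements in the question, the day thresholds would need to be chosen to match the 90-day, current-year, and 10-year rules.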

QUESTION 5

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution. Determine whether the solution meets the stated goals.

You develop a data ingestion process that will import data to a Microsoft Azure SQL Data Warehouse. The data to be

ingested resides in parquet files stored in an Azure Data Lake Gen 2 storage account.

You need to load the data from the Azure Data Lake Gen 2 storage account into the Azure SQL Data Warehouse.

Solution:

1. Create an external data source pointing to the Azure storage account

2. Create a workload group using the Azure storage account name as the pool name

3. Load the data using the INSERT…SELECT statement

Does the solution meet the goal?

A. Yes

B. No

Correct Answer: B

You need to create an external file format and external table using the external data source. You then load the data

using the CREATE TABLE AS SELECT statement.

References: https://docs.microsoft.com/en-us/azure/sql-data-warehouse/sql-data-warehouse-load-from-azure-data-lake-store
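The sequence described in the explanation (external data source, external file format, external table, then CREATE TABLE AS SELECT) can be sketched as a list of T-SQL statements. The Python helper below only assembles the statement text; it does not connect to a database. All object names, the two-column schema, the `ROUND_ROBIN` distribution, and the pre-existing database scoped credential `AdlsCredential` are assumptions made for illustration.

```python
def ctas_load_statements(account, container, folder, target_table):
    """Return the T-SQL statements for a PolyBase load, in execution order."""
    external_table = f"ext_{target_table}"
    return [
        # 1. External data source pointing at the Data Lake Gen 2 account.
        #    Assumes a database scoped credential named AdlsCredential exists.
        "CREATE EXTERNAL DATA SOURCE AdlsGen2Source WITH ("
        "TYPE = HADOOP, "
        f"LOCATION = 'abfss://{container}@{account}.dfs.core.windows.net', "
        "CREDENTIAL = AdlsCredential);",
        # 2. External file format describing the Parquet files.
        "CREATE EXTERNAL FILE FORMAT ParquetFormat WITH (FORMAT_TYPE = PARQUET);",
        # 3. External table over the files (hypothetical two-column schema).
        f"CREATE EXTERNAL TABLE dbo.{external_table} "
        "(Id INT, Amount DECIMAL(18, 2)) WITH ("
        f"LOCATION = '{folder}', "
        "DATA_SOURCE = AdlsGen2Source, "
        "FILE_FORMAT = ParquetFormat);",
        # 4. Load into the warehouse with CREATE TABLE AS SELECT.
        f"CREATE TABLE dbo.{target_table} WITH (DISTRIBUTION = ROUND_ROBIN) "
        f"AS SELECT * FROM dbo.{external_table};",
    ]


statements = ctas_load_statements("<account>", "<container>", "/sales/", "Sales")
for statement in statements:
    print(statement)
```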

QUESTION 6

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not

appear in the review screen.

You need to set up monitoring for tiers 6 through 8.

What should you configure?

A. extended events for average storage percentage that emails data engineers

B. an alert rule to monitor CPU percentage in databases that emails data engineers

C. an alert rule to monitor CPU percentage in elastic pools that emails data engineers

D. an alert rule to monitor storage percentage in databases that emails data engineers

E. an alert rule to monitor storage percentage in elastic pools that emails data engineers

Correct Answer: E

Scenario:

Tiers 6 through 8 must have unexpected resource storage usage immediately reported to data engineers.

Tier 3 and Tier 6 through Tier 8 applications must use database density on the same server and Elastic pools in a cost-effective manner.

QUESTION 7

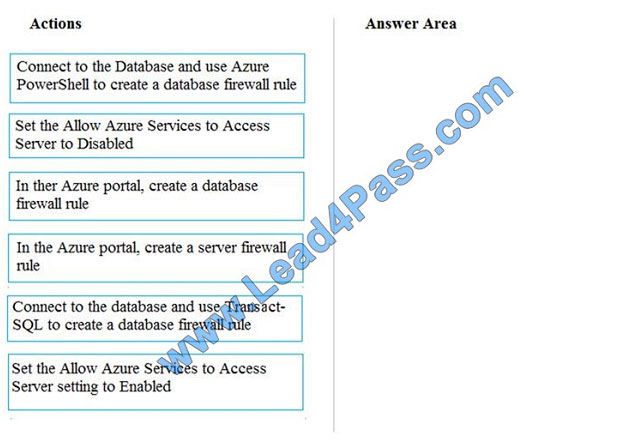

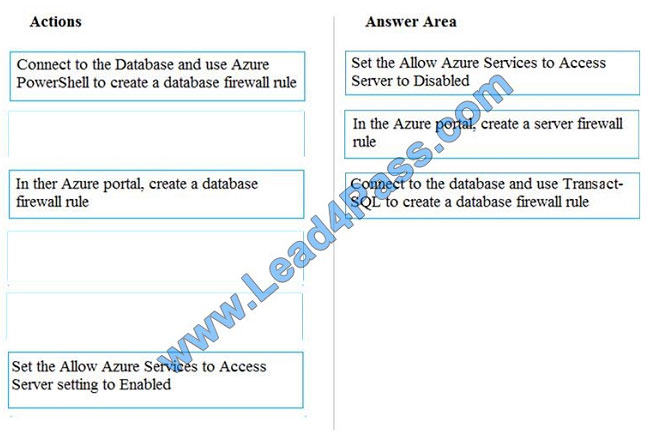

You need to set up access to Azure SQL Database for Tier 7 and Tier 8 partners.

Which three actions should you perform in sequence? To answer, move the appropriate three actions from the list of

actions to the answer area and arrange them in the correct order.

Select and Place:

Correct Answer:

Tier 7 and 8 data access is constrained to single endpoints managed by partners for access

Step 1: Set the Allow Azure Services to Access Server setting to Disabled

Set Allow access to Azure services to OFF for the most secure configuration.

By default, access through the SQL Database firewall is enabled for all Azure services, under Allow access to Azure

services. Choose OFF to disable access for all Azure services.

Note: The firewall pane has an ON/OFF button that is labeled Allow access to Azure services. The ON setting allows communications from all Azure IP addresses and all Azure subnets. These Azure IPs or subnets might not be owned by you. This ON setting is probably more open than you want your SQL Database to be. The virtual network rule feature offers much finer granular control.

Step 2: In the Azure portal, create a server firewall rule

Set up SQL Database server firewall rules

Server-level IP firewall rules apply to all databases within the same SQL Database server.

To set up a server-level firewall rule:

In Azure portal, select SQL databases from the left-hand menu, and select your database on the SQL databases page.

On the Overview page, select Set server firewall. The Firewall settings page for the database server opens.

Step 3: Connect to the database and use Transact-SQL to create a database firewall rule

Database-level firewall rules can only be configured using Transact-SQL (T-SQL) statements, and only after you've configured a server-level firewall rule.

To set up a database-level firewall rule:

Connect to the database, for example using SQL Server Management Studio.

In Object Explorer, right-click the database and select New Query.

In the query window, add this statement and modify the IP address to your public IP address:

EXECUTE sp_set_database_firewall_rule N'Example DB Rule','0.0.0.4','0.0.0.4';

On the toolbar, select Execute to create the firewall rule.

References:

https://docs.microsoft.com/en-us/azure/sql-database/sql-database-security-tutorial
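When several partner endpoints each need their own database-level rule, generating the statement text can reduce copy-and-paste mistakes. The Python sketch below validates the start and end addresses and formats the `sp_set_database_firewall_rule` call shown in Step 3. The helper name `database_firewall_rule_sql` is hypothetical, and the script only produces text; running the statement against the database is left to a tool such as SQL Server Management Studio.

```python
import ipaddress


def database_firewall_rule_sql(rule_name: str, start_ip: str, end_ip: str) -> str:
    """Format a database-level firewall rule statement after validating the range."""
    start = ipaddress.IPv4Address(start_ip)  # raises ValueError on a bad address
    end = ipaddress.IPv4Address(end_ip)
    if start > end:
        raise ValueError("start_ip must not be greater than end_ip")
    # Double any single quotes so the rule name stays a valid T-SQL literal.
    safe_name = rule_name.replace("'", "''")
    return (
        "EXECUTE sp_set_database_firewall_rule "
        f"N'{safe_name}', '{start}', '{end}';"
    )


# A single partner endpoint uses the same address for start and end.
rule_sql = database_firewall_rule_sql("Example DB Rule", "0.0.0.4", "0.0.0.4")
print(rule_sql)
```

Using the same address for both ends of the range matches the scenario's constraint that partner access goes through a single endpoint.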

QUESTION 8

You are the data engineer for your company. An application uses a NoSQL database to store data. The database uses

the key-value and wide-column NoSQL database type.

Developers need to access data in the database using an API.

You need to determine which API to use for the database model and type.

Which two APIs should you use? Each correct answer presents a complete solution.

NOTE: Each correct selection is worth one point.

A. Table API

B. MongoDB API

C. Gremlin API

D. SQL API

E. Cassandra API

Correct Answer: BE

B: Azure Cosmos DB is the globally distributed, multimodel database service from Microsoft for mission-critical

applications. It is a multimodel database and supports document, key-value, graph, and columnar data models.

E: Wide-column stores store data together as columns instead of rows and are optimized for queries over large

datasets. The most popular are Cassandra and HBase.

References: https://docs.microsoft.com/en-us/azure/cosmos-db/graph-introduction

https://www.mongodb.com/scale/types-of-nosql-databases

QUESTION 9

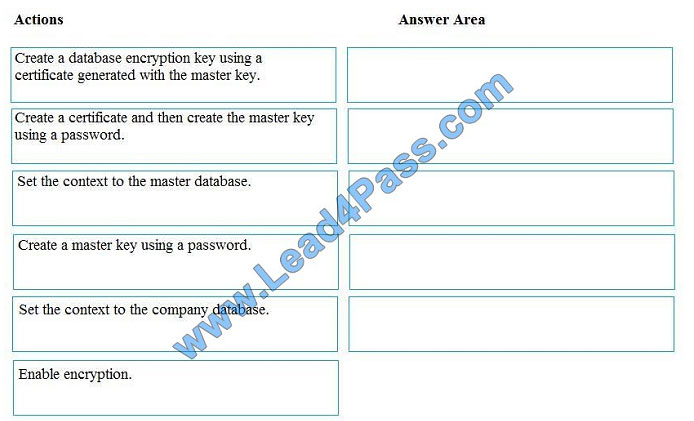

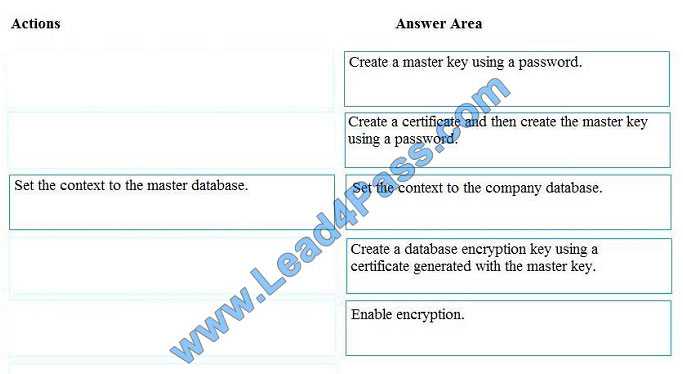

You manage security for a database that supports a line of business application.

Private and personal data stored in the database must be protected and encrypted.

You need to configure the database to use Transparent Data Encryption (TDE).

Which five actions should you perform in sequence? To answer, select the appropriate actions from the list of actions to

the answer area and arrange them in the correct order.

Select and Place:

Correct Answer:

Step 1: Create a master key

Step 2: Create or obtain a certificate protected by the master key

Step 3: Set the context to the company database

Step 4: Create a database encryption key and protect it by the certificate

Step 5: Set the database to use encryption

Example code:

USE master;
GO
CREATE MASTER KEY ENCRYPTION BY PASSWORD = '';
GO
CREATE CERTIFICATE MyServerCert WITH SUBJECT = 'My DEK Certificate';
GO
USE AdventureWorks2012;
GO
CREATE DATABASE ENCRYPTION KEY WITH ALGORITHM = AES_128 ENCRYPTION BY SERVER CERTIFICATE MyServerCert;
GO
ALTER DATABASE AdventureWorks2012 SET ENCRYPTION ON;
GO

References: https://docs.microsoft.com/en-us/sql/relational-databases/security/encryption/transparent-data-encryption

QUESTION 10

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution. Determine whether the solution meets the stated goals.

You develop a data ingestion process that will import data to a Microsoft Azure SQL Data Warehouse. The data to be

ingested resides in parquet files stored in an Azure Data Lake Gen 2 storage account.

You need to load the data from the Azure Data Lake Gen 2 storage account into the Azure SQL Data Warehouse.

Solution:

1. Create an external data source pointing to the Azure storage account

2. Create an external file format and external table using the external data source

3. Load the data using the INSERT…SELECT statement

Does the solution meet the goal?

A. Yes

B. No

Correct Answer: B

You load the data using the CREATE TABLE AS SELECT statement.

References: https://docs.microsoft.com/en-us/azure/sql-data-warehouse/sql-data-warehouse-load-from-azure-data-lake-store

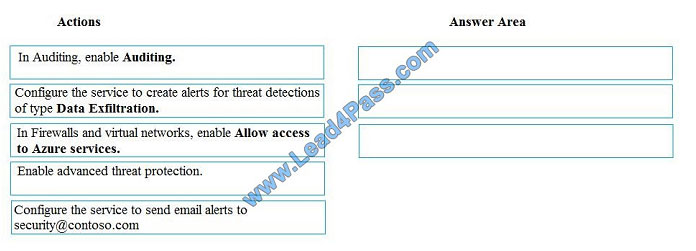

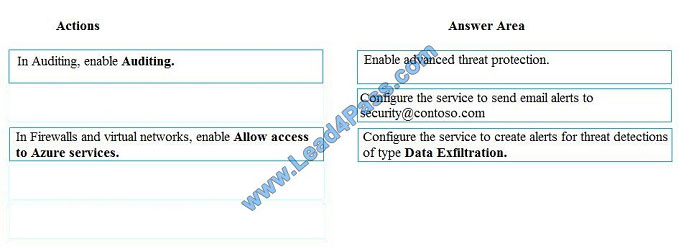

QUESTION 11

A company uses Microsoft Azure SQL Database to store sensitive company data. You encrypt the data and only allow

access to specified users from specified locations.

You must monitor data usage, and data copied from the system to prevent data leakage.

You need to configure Azure SQL Database to email a specific user when data leakage occurs.

Which three actions should you perform in sequence? To answer, move the appropriate actions from the list of actions

to the answer area and arrange them in the correct order.

Select and Place:

Correct Answer:

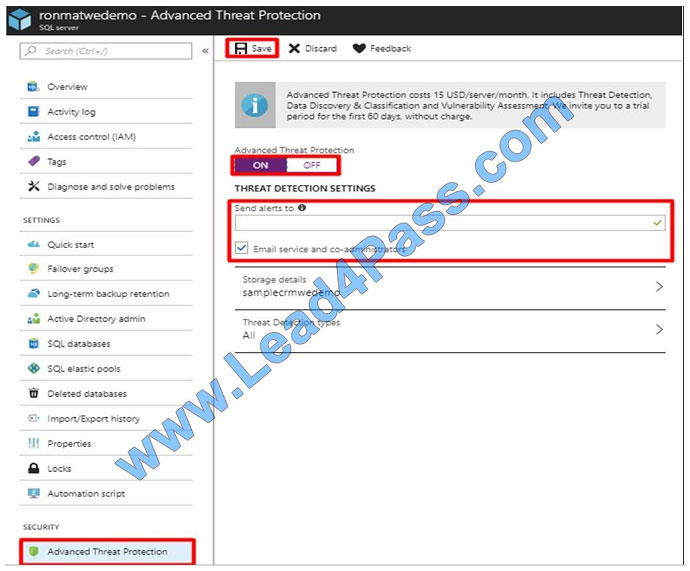

Step 1: Enable advanced threat protection

Set up threat detection for your database in the Azure portal

1. Launch the Azure portal at https://portal.azure.com.

2. Navigate to the configuration page of the Azure SQL Database server you want to protect. In the security settings,

select Advanced Data Security.

3. On the Advanced Data Security configuration page:

Enable advanced data security on the server.

In Threat Detection Settings, in the Send alerts to text box, provide the list of emails to receive security alerts upon

detection of anomalous database activities.

Step 2: Configure the service to send email alerts to [email protected]

Step 3:..of type data exfiltration

The benefits of Advanced Threat Protection for Azure Storage include:

Detection of anomalous access and data exfiltration activities.

Security alerts are triggered when anomalies in activity occur: access from an unusual location, anonymous access, access by an unusual application, data exfiltration, unexpected delete operations, access permission change, and so on.

Admins can view these alerts via Azure Security Center and can also choose to be notified of each of them via email.

References:

https://docs.microsoft.com/en-us/azure/sql-database/sql-database-threat-detection

https://www.helpnetsecurity.com/2019/04/04/microsoft-azure-security/

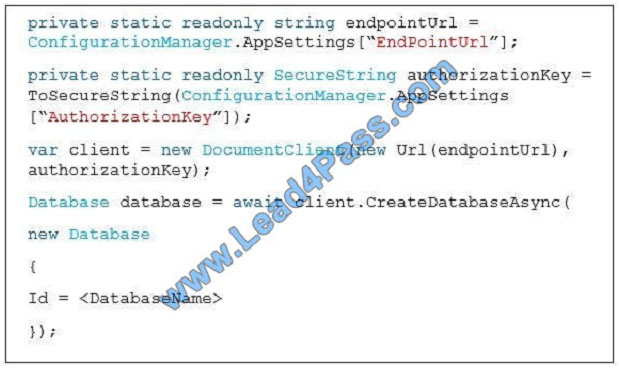

You develop data engineering solutions for a company. An application creates a database on Microsoft Azure. You have

the following code:

QUESTION 12

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not

appear in the review screen.

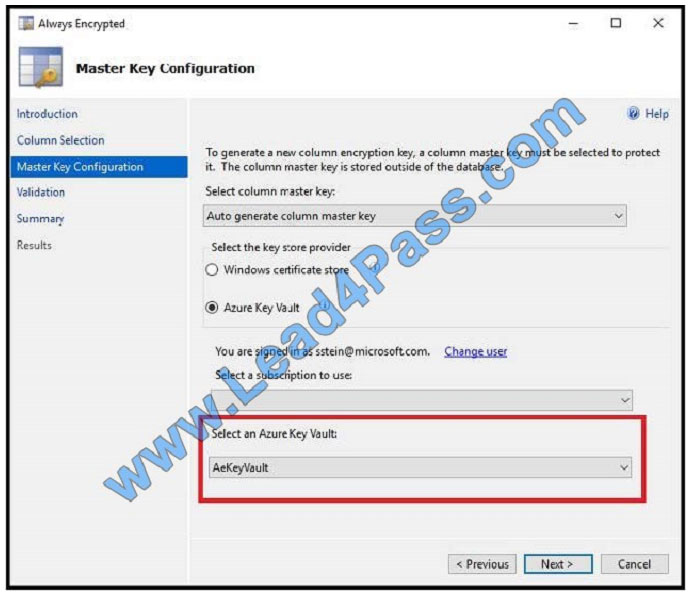

You need to configure data encryption for external applications.

Solution:

1. Access the Always Encrypted Wizard in SQL Server Management Studio

2. Select the column to be encrypted

3. Set the encryption type to Randomized

4. Configure the master key to use the Windows Certificate Store

5. Validate configuration results and deploy the solution

Does the solution meet the goal?

A. Yes

B. No

Correct Answer: B

Use the Azure Key Vault, not the Windows Certificate Store, to store the master key.

Note: The Master Key Configuration page is where you set up your CMK (Column Master Key) and select the key store

provider where the CMK will be stored. Currently, you can store a CMK in the Windows certificate store, Azure Key

Vault, or a hardware security module (HSM).

References: https://docs.microsoft.com/en-us/azure/sql-database/sql-database-always-encrypted-azure-key-vault

QUESTION 13

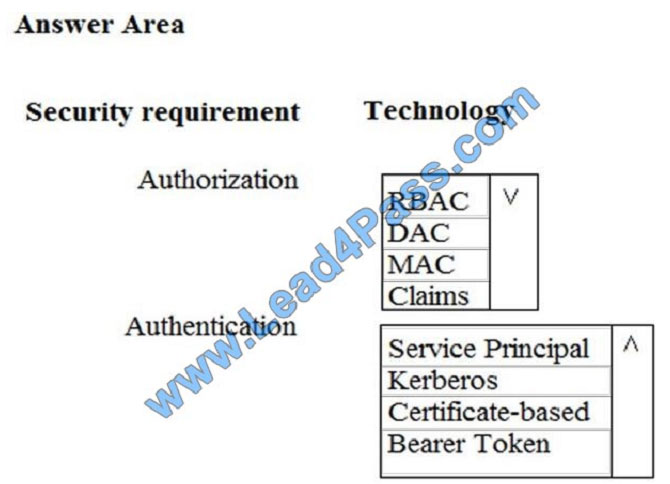

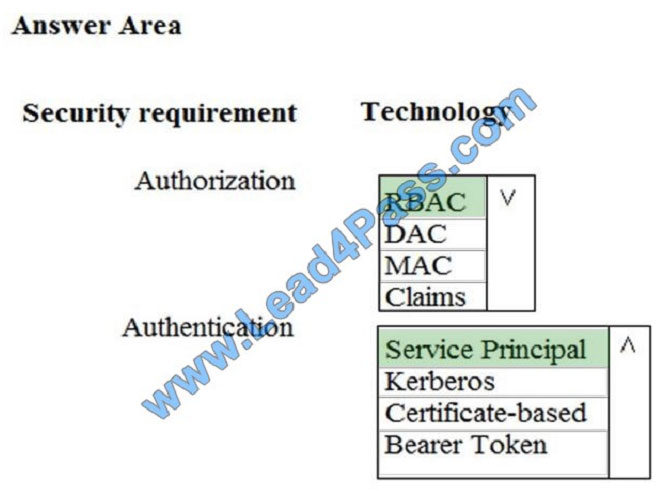

You need to ensure that Azure Data Factory pipelines can be deployed. How should you configure authentication and

authorization for deployments? To answer, select the appropriate options in the answer choices. NOTE: Each correct

selection is worth one point.

Hot Area:

Correct Answer:

The way you control access to resources using RBAC is to create role assignments. This is a key concept to understand: it's how permissions are enforced. A role assignment consists of three elements: security principal, role definition, and scope.

Scenario:

No credentials or secrets should be used during deployments

Phone-based poll data must only be uploaded by authorized users from authorized devices

Contractors must not have access to any polling data other than their own

Access to polling data must be set on a per-Active Directory user basis

References:

https://docs.microsoft.com/en-us/azure/role-based-access-control/overview
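To make the three elements of a role assignment concrete, here is a toy Python model. It is a simplified sketch, not the Azure SDK: it only captures that an assignment pairs a security principal with a role definition at a scope, and that a scope covers the resources beneath it. The principal name, subscription ID, resource group, and factory name are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoleAssignment:
    """Toy model of an RBAC role assignment: who, what role, and where."""
    principal: str        # security principal (user, group, or service identity)
    role_definition: str  # named set of permissions
    scope: str            # resource path the assignment applies to

    def applies_to(self, resource_id: str) -> bool:
        """Return True if the resource is the scope itself or sits beneath it."""
        scope = self.scope.rstrip("/").lower()
        resource = resource_id.rstrip("/").lower()
        # Require a path boundary so ".../polling-rg" does not match ".../polling-rg2".
        return resource == scope or resource.startswith(scope + "/")


# Hypothetical identity and resource names, for illustration only.
assignment = RoleAssignment(
    principal="deployment-pipeline-identity",
    role_definition="Data Factory Contributor",
    scope="/subscriptions/sub-1/resourceGroups/polling-rg",
)

factory_id = (
    "/subscriptions/sub-1/resourceGroups/polling-rg"
    "/providers/Microsoft.DataFactory/factories/polling-adf"
)
print(assignment.applies_to(factory_id))
print(assignment.applies_to("/subscriptions/sub-1/resourceGroups/polling-rg2"))
```

Assigning the role at resource-group scope, as in this sketch, is one way to let a deployment identity manage the factory without granting it access across the whole subscription.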

Related DP-200 Popular Exam resources

leads4pass Year-round Discount Code

What are the advantages of leads4pass?

leads4pass employs the most authoritative exam specialists from Microsoft, Cisco, CompTIA, IBM, EMC, etc. We update exam data throughout the year. Highest pass rate! We have a large user base. We are the industry leader! Choose leads4pass to pass the exam with ease!

Summarize:

It’s not easy to pass the Microsoft DP-200 exam, but with accurate learning materials and proper practice, you can crack the exam with excellent results. leads4pass.com provides you with the most relevant learning materials that you can use to help you prepare.